Review Article - Journal of Neuroinformatics and Neuroimaging (2018) Volume 3, Issue 1

Musicality: Recent insights from imaging.

Andrew Mowat*

Ear Institute, UCL Institute of Education, London, UK

*Corresponding Author:

Andrew Mowat

Ear Institute

UCL Institute of Education

London

UK

Tel: 07903910917

E-mail: andrewmowat@live.com

Accepted date: January 25, 2018

Citation: Mowat A. Musicality: Recent insights from imaging. Neuroinform Neuroimaging 2018;3(1):1-5.

Abstract

The composition and performance of music require the interpretation of subtle changes of pitch. With training, appreciation and comprehension of tone improve to a point where notes can be recognized with or without a reference tone. Modern imaging modalities have displayed some of the changes that occur in neural networks. However, differences are often innate rather than acquired. The distinction is difficult, as elite musicians display a blend of talent and dedication. This review will analyze factors that influence personal musicality, and the relationship between music and language. A suggestion is offered as to how further insight into the field might be gained.

Keywords

Musicality, Pitch, Imaging, Language, Composition.

Introduction

Relative pitch (RP) is the ability to identify the interval between notes. It is almost universal amongst elite musicians and is acquired early during training.

Absolute pitch (AP) describes a rare phenomenon in which a tone can be identified without a reference. It has a population prevalence of approximately 1 in 10,000, but is significantly over-represented amongst musicians. Many classical composers, notably Wolfgang Amadeus Mozart, displayed the talent.

Musicians with RP who do not have AP by adulthood will never progress to true AP, but with training may learn to identify tones in a similar manner, termed ‘pseudo absolute pitch’. This requires dedication and is quickly lost without maintenance.

Before AP musicians can be presented as case studies of musicality, we must assess whether AP is inherited or acquired.

At the turn of the twentieth century, it was proposed that AP was a product of environment. Specifically, an infant predisposition towards AP was thought to be ‘unlearnt’ by the majority, because early sound exposure is not conducive to its maintenance [1].

Appropriate early musical training is crucial. Children who began music training before the age of 5 years and who had an analytical cognitive style were much more likely to develop AP [2]. Furthermore, infants are more likely to track patterns of absolute than relative pitch, implying a preference for this pattern from birth [3].

Alternatively, the ‘giftedness’ model sees AP as an inherited genetic trait. Proponents suggest that a number of subtypes exist with subtly different neurobiological mechanisms. Survey results from college music students are supportive. Amongst those who possessed AP, 48% had a first-degree relative with AP, versus only 14% of non-AP possessors [4]. A genome-wide linkage analysis of 73 families with multiple AP members showed multiple independent peaks, suggesting genetic heterogeneity. In European families, the strongest association was with the locus 8q24.21 [5].

A new identification paradigm, which is independent of a subject’s musical experience, is supportive of the giftedness model. It shows that AP ability exists at high levels in non-musical populations. When the paradigm is used to test children with minimal musical experience, the majority perform poorly. Rarely, a child performs comparably to an adult with AP. It is hypothesized that such children are candidates to progress to AP with further training [6].

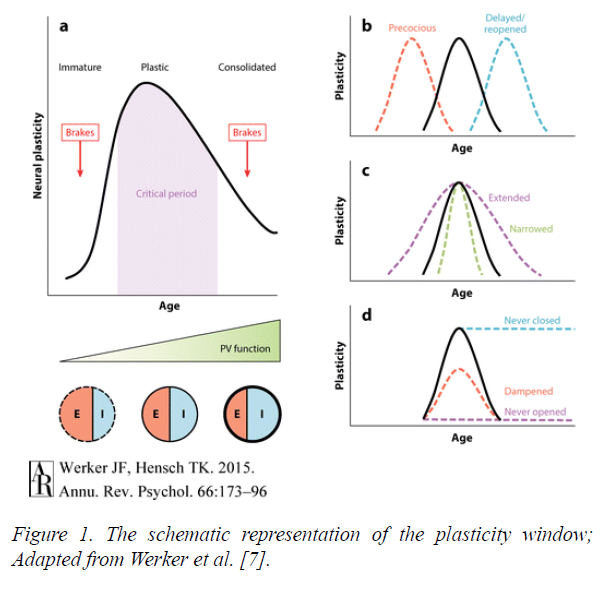

The models have been synthesized to create a modern image of AP genesis, in which a person with the correct genetic make-up goes through a ‘critical period’ (CP) of early development. The idea of a CP for neural plasticity is one that is well established in other sensory modalities, such as vision.

The schematic in Figure 1 shows this period, with the ‘brakes’ placed on plasticity before and after.

Figure 1: The schematic representation of the plasticity window; Adapted from Werker et al. [7].

AP should not be seen as totally synonymous with musicality. Experiments in which listeners with and without AP had to identify pitch relations showed that some AP listeners are relatively poor in a tonal context. Instead, they stick to absolute pitch even when relative pitch is required [8]. Other experiments are contradictory, showing that musicians’ performance in AP tests was highly correlated with performance in musical dictation, which does not require AP. This suggests that AP is associated with proficiency in musical tasks even if they are predominantly based on RP [9].

Scientists have used AP cases to gain insight into how neural networks develop during musical training. Multiple imaging modalities have been used to compare those with AP and RP, and to compare both with non-musicians. These are discussed below.

Discussion

The invention of magnetic resonance imaging (MRI) in the 1970s was a paradigm shift in neural imaging. Its accurate resolution allows distinction between grey and white matter, whilst the absence of ionizing radiation makes it ethical for research.

MRI studies have been used to compare the gross anatomy of the musical and non-musical brain. They have shown that professional keyboard players have larger cerebellums than controls, although there was no difference in overall brain volume [10].

Musicians display increased leftward asymmetry of the planum temporale (PT). This is a triangular area of the superior temporal gyrus known to be physiologically larger on the left [11]. The difference is due to a reduction in size of the right PT in musicians, suggesting that a process of selective cell death takes place during training [12].

Similar work using MRI and diffusion tensor imaging compared musicians with and without AP. Whole-brain cortical thickness and tract-based spatial statistics (TBSS) analyses were performed, showing increased cortical thickness in a number of areas, including the superior temporal gyrus bilaterally and the left inferior frontal gyrus [13].

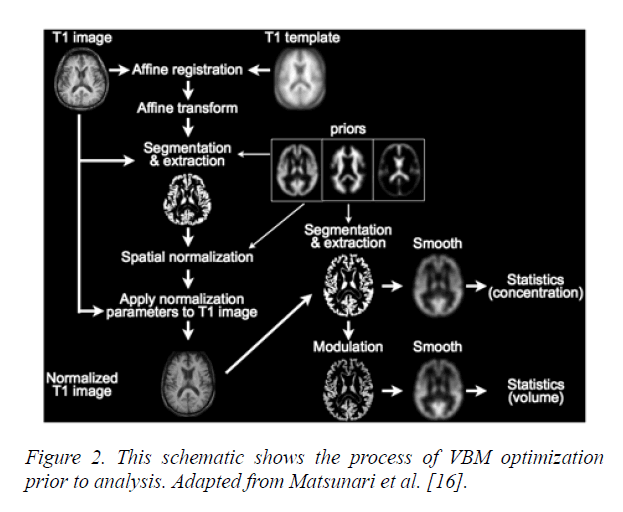

Voxel-Based Morphometry (VBM) (Figure 2) is a method of neuroimaging statistical analysis that uses registration to create a template from a large number of brains. It has shown grey matter volume differences in the motor, auditory and visuospatial brain regions between professional musicians and lay people [14]. However, the technique must be used with caution, as it is limited in characterizing morphological differences between groups. It is useful for highlighting localized and linear group differences, but is unable to pick up disparities that are subtle or spatially complex [15].

Figure 2: This schematic shows the process of VBM optimization prior to analysis. Adapted from Matsunari et al. [16].
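As a rough illustration of the group comparison that VBM ultimately performs, the sketch below computes a Welch t statistic at every voxel of two sets of grey matter maps. It assumes the maps have already been registered to a common template. The function name, array shapes and synthetic data are hypothetical, and a real analysis would also involve segmentation, smoothing and correction for multiple comparisons.

```python
import numpy as np


def voxelwise_t(group_a, group_b):
    """Welch t statistic per voxel.

    Each input has shape (subjects, x, y, z) and is assumed to hold
    grey matter values already aligned to the same template.
    """
    a = np.asarray(group_a, dtype=float)
    b = np.asarray(group_b, dtype=float)
    mean_diff = a.mean(axis=0) - b.mean(axis=0)
    se = np.sqrt(a.var(axis=0, ddof=1) / a.shape[0]
                 + b.var(axis=0, ddof=1) / b.shape[0])
    # Voxels with zero variance in both groups are left at t = 0.
    return np.divide(mean_diff, se, out=np.zeros_like(mean_diff), where=se > 0)


# Synthetic demonstration: 20 "musicians" and 20 "controls" on a tiny 4x4x4 grid,
# with a localized grey matter increase planted at one voxel (invented data).
rng = np.random.default_rng(0)
musicians = rng.normal(0.5, 0.05, size=(20, 4, 4, 4))
controls = rng.normal(0.5, 0.05, size=(20, 4, 4, 4))
musicians[:, 1, 2, 3] += 0.5

t_map = voxelwise_t(musicians, controls)
peak = np.unravel_index(np.argmax(t_map), t_map.shape)
print("peak voxel:", peak)
```

A localized, large difference of this kind is the case in which a voxel-wise test works well; the caution raised above concerns differences that are subtle or spread across a spatially complex pattern.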

Whilst continuing improvements in MRI technology offer more accurate resolution, it can only ever provide static images that display basic anatomical structure.

The invention of functional magnetic resonance imaging (fMRI) in the 1990s allowed the acquisition of functional as well as structural information. The technique uses blood-oxygen-level-dependent (BOLD) contrast to localize task-related modulations in activity. It retains the accurate spatial resolution of MRI and, with standard techniques, has a temporal resolution of the order of a few seconds.

fMRI comparison of AP and non-AP musicians displays a network exclusive to the AP group, linking the right PT with the secondary somatosensory and premotor cortices. The experimenters suggest that this right-hemispheric network mediates AP perception, whilst the labeling of pitch takes place in the left hemisphere [17].

Positron emission tomography (PET) utilizes a radioactive glucose analogue, fluorodeoxyglucose (FDG). Its decay at sites of metabolism emits a positron, whose annihilation gives off a pair of detectable gamma rays. The images created can be superimposed on a previously taken CT or MRI image in a process known as ‘registration’. Registration creates an unwanted source of error, as the patient may reposition themselves slightly between the two images being taken. Performing PET and CT measurements within the same system, without the patient moving, minimizes this problem. PET only produces a single image every 40 seconds, so it is unable to show the rapid brain interactions seen with fMRI.

PET has been used to measure cerebral blood flow whilst presenting musical tones to subjects with and without AP. Whilst both groups showed a similar pattern of increased cerebral blood flow in the auditory cortical areas, increased activity was observed in the right inferior frontal cortex in controls, but not in AP subjects [18]. This area is known to be involved in working memory, suggesting that those with AP do not require working memory for pitch perception. Overall, the conclusion reached is that AP does not rely on a specific underlying brain architecture, but instead on the recruitment of specialized neural networks for verbal tone association.

Other modalities look at the electrical activity of the neurons. Electroencephalography (EEG) picks up the electrical activity of multiple neurons using scalp electrodes. It uses the electrical potentials detected to recreate a source model.

When the EEG response is time locked to a stimulus, the resulting responses are called event-related potentials (ERPs). EEG provides a radiation-free mechanism for analyzing neuronal activity, with excellent temporal resolution of the order of milliseconds. The major drawback is poor spatial resolution, due to the unpredictable conductivity of the overlying tissues.

EEG has been used to compare RP and AP; the results show that the neuronal processes associated with AP occur earlier and more automatically than those for RP [19].
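The time locking that defines an ERP can be sketched as follows: segments of the recording around each stimulus are cut out, baseline-corrected and averaged, so that activity unrelated to the stimulus tends to cancel. This is a minimal single-channel illustration under stated assumptions, not the pipeline of any cited study; the function name, epoch lengths and synthetic signal are hypothetical.

```python
import numpy as np


def average_erp(eeg, event_samples, pre, post):
    """Average stimulus-locked epochs of a single-channel EEG trace.

    eeg           : 1-D array of voltages
    event_samples : sample indices at which the stimulus occurred
    pre, post     : number of samples kept before and after each event
    """
    eeg = np.asarray(eeg, dtype=float)
    epochs = []
    for s in event_samples:
        if s - pre < 0 or s + post > len(eeg):
            continue  # skip events too close to the edges of the recording
        epoch = eeg[s - pre:s + post].copy()
        if pre > 0:
            epoch -= epoch[:pre].mean()  # baseline-correct on the pre-stimulus window
        epochs.append(epoch)
    if not epochs:
        raise ValueError("no complete epochs available")
    # Activity that is not time locked to the stimulus averages towards zero.
    return np.mean(epochs, axis=0)


# Synthetic demonstration: a small evoked deflection buried in much larger noise.
rng = np.random.default_rng(0)
recording = rng.normal(0.0, 5.0, size=20_000)
events = np.arange(500, 19_500, 200)
evoked = np.hanning(50)  # hypothetical response shape, 50 samples long
for s in events:
    recording[s:s + 50] += evoked

erp = average_erp(recording, events, pre=100, post=100)
print(erp.shape)
```

Because the noise shrinks roughly with the square root of the number of epochs, many stimulus repetitions are usually needed before a small response becomes visible.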

Magnetoencephalography (MEG) uses the magnetic field created by the electrical activity of neurons. It is as non-invasive and simple as EEG, whilst displaying greater temporal resolution. Unfortunately, because the sensors need to be kept at near absolute zero to detect the tiny magnetic fields, the modality is exceptionally expensive to run and maintain.

For both EEG and MEG, the methods used require ‘lead fields’. These are sensitivity maps of the head volume for each sensor used in the measurements. Neither gives anatomical information, a problem which can be overcome by overlaying the information onto previously taken MRIs.

MEG has been used to show that there is a difference in hemispheric lateralization in musicians. It contradicted the previous consensus that music processing is right hemisphere dominant. MEG studies used mismatch fields, measured from 8 musicians and non-musicians in oddball tasks. In the non-musician cohort the right hemisphere was dominant. However, musicians showed symmetrical mismatch field amplitudes bilaterally. VBM applied to the same cohort did not show any group differences. The results argue for musical training altering the functional pitch dominance of the hemispheres without changing neural structures [20].
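Hemispheric dominance of this kind is often summarised with a laterality index, (L - R) / (L + R), which is zero for a symmetrical response and negative when the right hemisphere dominates. The sketch below is illustrative only: the index is a common convention rather than the measure reported in the cited study, and the amplitudes are invented.

```python
def laterality_index(left, right):
    """Return (L - R) / (L + R) for two non-negative response amplitudes.

    Positive values indicate left dominance, negative values right
    dominance, and zero a symmetrical response.
    """
    total = left + right
    if total == 0:
        raise ValueError("both amplitudes are zero; the index is undefined")
    return (left - right) / total


# Hypothetical mismatch field amplitudes in arbitrary units (invented values).
non_musician = laterality_index(left=5.0, right=15.0)  # right hemisphere dominant
musician = laterality_index(left=10.0, right=10.0)     # symmetrical
print(non_musician, musician)
```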

MEG has also been used to show that relative pitch can be subcategorized into fundamental and spectral pitch perception. A cohort of 420 subjects, including professional musicians, all displaying RP, was divided by dominant perception method. One subgroup underwent MRI and MEG during pitch perception tasks. The results showed a clear correlation between lateralization of grey matter in Heschl’s gyrus and the mode of pitch perception. Those who perceived pitch spectrally showed a right-sided bias. Those who decoded pitch fundamentally showed a left-sided bias [21].

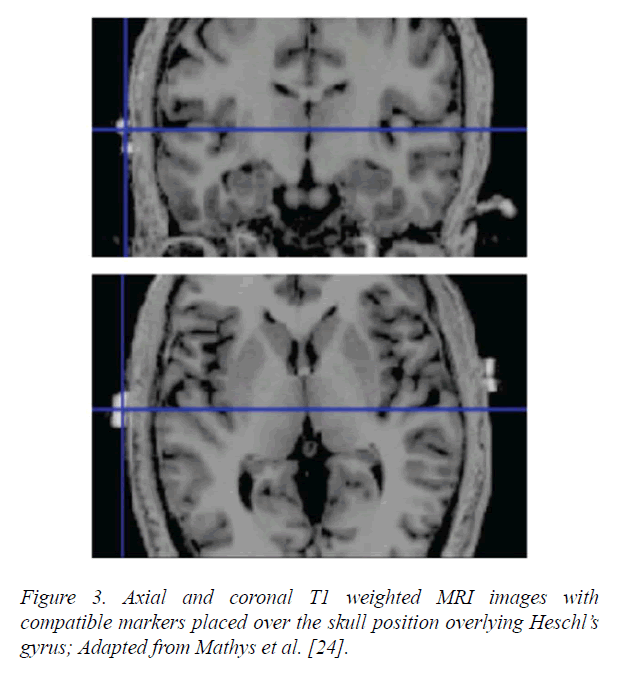

A recent advance is the ability to stimulate the brain at the same time as imaging it, using transcranial direct current stimulation (tDCS). The stimulation can upregulate or downregulate neuronal activity depending on whether it is anodal or cathodal. This technique has shown that both the left and right Heschl’s gyri modulate pitch discrimination, with the strongest response seen in the right auditory cortex (Figure 3).

Figure 3: Axial and coronal T1 weighted MRI images with compatible markers placed over the skull position overlying Heschl’s gyrus; Adapted from Mathys et al. [24].

Further experiments using tDCS confirm the importance of the right auditory cortex in pitch learning. Participants were trained for three days while pitch discrimination thresholds were measured. The experimenters administered anodal, cathodal or sham tDCS over the right auditory cortex to three separate groups during the second day. The sham and cathodal groups showed reduced pitch thresholds, whilst anodal stimulation had a blocking effect on pitch learning [22].

tDCS has been used to show that the role of the supramarginal gyrus (SMG) changes with musical training. In musicians, cathodal stimulation over the left SMG had no effect. However, in non-musicians tDCS over the left SMG led to a deficit in pitch memory. This experiment reaches the interesting conclusion that a cortical area has lost a function through musical training [23].

Just as AP has provided an incisive window onto how pitch perception is processed, the study of dysfunction can be illuminating. ‘Amusia’ describes a musical disorder characterized by a defect in pitch processing. It can be congenital or acquired, and is a common occurrence following ischaemic middle cerebral artery strokes [25]. VBM studies of the amusic brain showed a reduction in white matter in the right inferior frontal gyrus, supporting the importance of the area in pitch perception [26].

Overall, imaging research into the neural underpinnings of musicality has advanced considerably over the last 30 years. Many key neural areas have been identified as being of importance in music recognition, for example the right and left planum temporale. However, it is increasingly clear that the neural changes that occur with training are subtle. Many changes are too small to be detected by anatomical analysis and instead occur at the level of neural patterning. As the spatial and temporal resolution of imaging techniques improves further, so will our understanding.

Speech recognition

There is a clear association between musical training and speech recognition. fMRI studies have shown that different haemodynamic responses are induced in AP possessors compared with RP possessors and non-musicians. The AP activation response is stronger in the posterior part of the middle temporal gyrus and weaker in the anterior mid-part of the superior temporal gyrus. The pattern is heavily influenced by the auditory acuity of AP [27].

By varying the speech stimuli, the authors demonstrated repeatable activation differences between the groups. Overall, the results suggest that expertise in pitch processing influences speech perception and sentence meaning.

As discussed, AP has been associated with morphological changes in the PT, which is an area known to be involved in speech perception. fMRI techniques have been used in professional musicians and controls to display the contribution of the left PT to the categorization of consonant-vowel syllables, and their analogues. Musicians showed enhanced blood flow in the left PT, and superior discrimination levels. This work supported their preexisting hypothesis that the PT is crucial to the perception of rapidly changing auditory patterns whether they are in language or music [28].

Auditory brainstem responses have been used to compare children who are musicians with those who are not. The outcomes show that musicians have an advantage in processing speech in noise. The group proposed that training produces an improvement in cognitive abilities that exerts top-down cortical control over perception [29].

However, other experiments raise the possibility that the relationship between music and language is not causal. A group of AP and non-AP musicians was given a series of visual and auditory digit span tests. AP possessors performed significantly better on the auditory but not the visual tests. The authors speculate that a large auditory memory may develop early in life. This allows the association between pitches and their names, promoting AP development [30].

Furthermore, cross-cultural studies imply that the causal relationship may run the other way. In a study comparing American and Chinese music students, AP was far more prevalent amongst the Chinese [30]. The difference may originate from the tonal nature of Mandarin, in which pitch conveys word meaning. The American students were broken down further by ethnicity and by whether they spoke a tonal language. Amongst the American Chinese students, performance on an AP test was higher in those who spoke a tonal language fluently. The performance of those who spoke a tonal language only fairly fluently, or not at all, did not differ significantly from that of Caucasian students [31].

Future experimental opportunities

Finally, I will discuss future experimental paradigms with the potential to further increase understanding.

Experiments in mice show that visual plasticity is influenced at the cellular level by epigenetic changes, such as histone acetylation and phosphorylation, which influence gene transcription. By treating adult mice with trichostatin (an antifungal agent), experimenters were able to re-establish ocular plasticity.

It has been shown that valproate, a histone deacetylase inhibitor, can perform a similar task in the auditory system. In mice, the drug has been used to reopen the critical period for music preference. When music is played between postnatal days 15 and 24, it reverses the murine bias for silent shelter.

Normally, mice that reach adulthood before musical exposure cannot be influenced by hearing music later in life. However, acute valproate treatment causes the CP to reopen in these mice [32]. Similarly, in humans it has been shown that normal male volunteers perform significantly better on a test of AP after a fortnight of valproate treatment [33]. Theoretically, the drug may be able to reopen the critical period for musicality. Presently, valproate has a significant side effect profile, making it unethical to give to healthy volunteers. In the future, pharmacological advances may make it possible to influence the epigenetic markers accurately and cleanly. This will open the possibility of developing ways to influence neural plasticity, to achieve scientific advance and potentially to improve our ability to learn new skills later in life.

By combining valproate, or a modern analogue, with one of the imaging modalities previously discussed, a powerful new experimental paradigm could be created, with the potential to analyse neural changes as they occur rather than in retrospect. If this were combined with tDCS, training could be modulated as it occurs during the reopened critical period, to see precisely how the neural networks are laid down.

Conclusion

It is clear that speech recognition and music share an overlapping skill set, with both reliant on rhythm and pitch perception. They share an underlying neural developmental framework. However, it remains unclear whether musicality has a causal effect on speech recognition or whether the association is mediated by other developmental mechanisms.

References

- Abraham O. The absolute tone consciousness. Psychological-musical study. Anthologies of The International Music Society. 1901;3(H1):1-86.

- Chin CS. The development of absolute pitch: A theory concerning the roles of music training at an early developmental age and individual cognitive style. Psychology of Music. 2003;31(2):155-71.

- Saffran JR, Griepentrog GJ. Absolute pitch in infant auditory learning: evidence for developmental reorganization. Developmental Psychology. 2001;37(1):74.

- Baharloo S, Johnston PA, Gitschier J, et al. Absolute pitch: an approach for identification of genetic and nongenetic components. The American Journal of Human Genetics. 1998;62(2):224-31.

- Theusch E, Basu A, Gitschier J. Genome-wide study of families with absolute pitch reveals linkage to 8q24.21 and locus heterogeneity. The American Journal of Human Genetics. 2009;85(1):112-9.

- Ross DA, Marks LE. Absolute pitch in children prior to the beginning of musical training. Annals of the New York Academy of Sciences. 2009;1169(1):199-204.

- Werker JF, Hensch TK. Critical periods in speech perception: new directions. Annual Review of Psychology. 2015;66:173-96.

- Miyazaki KI. Absolute pitch as an inability: Identification of musical intervals in a tonal context. Music Perception: An Interdisciplinary Journal. 1993;11(1):55-71.

- Dooley K, Deutsch D. Absolute pitch correlates with high performance on musical dictation. The Journal of the Acoustical Society of America. 2010;128(2):890-3.

- Hutchinson S, Lee LH, Gaab N, et al. Cerebellar volume of musicians. Cerebral Cortex. 2003;13(9):943-9.

- Shapleske J, Rossell SL, Woodruff PW, et al. The planum temporale: a systematic, quantitative review of its structural, functional and clinical significance. Brain Research Reviews. 1999;29(1):26-49.

- Keenan JP, Thangaraj V, Halpern AR, et al. Absolute pitch and planum temporale. Neuroimage. 2001;14(6):1402-8.

- Dohn A, Garza-Villarreal EA, Chakravarty MM, et al. Gray-and white-matter anatomy of absolute pitch possessors. Cerebral Cortex. 2013;25(5):1379-88.

- Gaab N, Gaser C, Zaehle T, et al. Functional anatomy of pitch memory—an fMRI study with sparse temporal sampling. Neuroimage. 2003;19(4):1417-26.

- Davatzikos C. Why voxel-based morphometric analysis should be used with great caution when characterizing group differences. Neuroimage. 2004;23(1):17-20.

- Wengenroth M, Blatow M, Heinecke A, et al. Increased volume and function of right auditory cortex as a marker for absolute pitch. Cerebral Cortex. 2013;24(5):1127-37.

- Matsunari I, Samuraki M, Chen WP, et al. Comparison of 18F-FDG PET and optimized voxel-based morphometry for detection of Alzheimer's disease: aging effect on diagnostic performance. Journal of Nuclear Medicine. 2007;48(12):1961-70.

- Zatorre RJ, Perry DW, Beckett CA, et al. Functional anatomy of musical processing in listeners with absolute pitch and relative pitch. Proceedings of the National Academy of Sciences. 1998;95(6):3172-7.

- Itoh K, Suwazono S, Arao H, et al. Electrophysiological correlates of absolute pitch and relative pitch. Cerebral Cortex. 2004;15(6):760-9.

- Ono K, Nakamura A, Yoshiyama K, et al. The effect of musical experience on hemispheric lateralization in musical feature processing. Neuroscience Letters. 2011;496(2):141-5.

- Schneider P, Sluming V, Roberts N, et al. Structural and functional asymmetry of lateral Heschl's gyrus reflects pitch perception preference. Nature Neuroscience. 2005;8(9):1241.

- Matsushita R, Andoh J, Zatorre RJ. Polarity-specific transcranial direct current stimulation disrupts auditory pitch learning. Frontiers in Neuroscience. 2015;9:174.

- Schaal NK, Krause V, Lange K, et al. Pitch memory in nonmusicians and musicians: revealing functional differences using transcranial direct current stimulation. Cerebral Cortex. 2014;25(9):2774-82.

- Mathys C, Loui P, Zheng X, et al. Non-invasive brain stimulation applied to Heschl's gyrus modulates pitch discrimination. Frontiers in Psychology. 2010;1:193.

- Särkämö T, Tervaniemi M, Soinila S, et al. Cognitive deficits associated with acquired amusia after stroke: a neuropsychological follow-up study. Neuropsychologia. 2009;47(12):2642-51.

- Hyde KL, Zatorre RJ, Griffiths TD, et al. Morphometry of the amusic brain: a two-site study. Brain. 2006;129(10):2562-70.

- Oechslin MS, Meyer M, Jancke L. Absolute pitch—Functional evidence of speech-relevant auditory acuity. Cerebral Cortex. 2009;20(2):447-55.

- Elmer S, Meyer M, Jäncke L. Neurofunctional and behavioral correlates of phonetic and temporal categorization in musically trained and untrained subjects. Cerebral Cortex. 2011;22(3):650-8.

- Strait DL, Parbery-Clark A, Hittner E, et al. Musical training during early childhood enhances the neural encoding of speech in noise. Brain and Language. 2012;123(3):191-201.

- Deutsch D, Henthorn T, Marvin E, et al. Absolute pitch among American and Chinese conservatory students: Prevalence differences, and evidence for a speech-related critical period. The Journal of the Acoustical Society of America. 2006;119(2):719-22.

- Deutsch D, Dooley K, Henthorn T, et al. Absolute pitch among students in an American music conservatory: Association with tone language fluency. The Journal of the Acoustical Society of America. 2009;125(4):2398-403.

- Yang EJ, Lin EW, Hensch TK. Critical period for acoustic preference in mice. Proceedings of the National Academy of Sciences. 2012;109(Supplement 2):17213-20.

- Gervain J, Vines BW, Chen LM, et al. Valproate reopens critical-period learning of absolute pitch. Frontiers in Systems Neuroscience. 2013;7:102.