Research Article - Journal of Psychology and Cognition (2017) Volume 2, Issue 1

Differentiating recognition for anger and fear facial expressions via inhibition of return.

Sun Juncai1*, Zhao Jing1, Shi Rongb2

1Department of Psychological Sciences, Qufu Normal University, China

2Department of Psychological Sciences, Nanji Normal University, China

*Corresponding Author:

Sun Juncai

Department of Psychological Sciences

Qufu Normal University, No. 57 Jingxuan West Road, China

Tel: 15865701660

E-mail: sunjuncai266@163.com

Accepted date: December 22, 2016

DOI: 10.35841/psychology-cognition.2.1.10-16

Abstract

The goal of this study was to determine whether two negative emotions, fear and anger, both known for their attention-grabbing capacity, are equally prone to the detection cost or habituation of attention as measured by inhibition of return (IOR). The study used video screenshots, which are more ecologically valid, as target stimuli. The main finding was that anger, relative to fear, could override the IOR effect. We discuss this differentiation in terms of two cognitive systems, one evolved for mastery of the natural environment and the other for purposes of social harmony: in the evolutionary framework, anger signals a social/relational threat, whereas fear signals a threat that comes from the environment.

Keywords

Inhibition of return, Anger, Fear, Types of cognition.

Introduction

Fear and anger

In the evolutionary framework, anger signals a social/relational threat, whereas fear signals a threat that comes from the environment. For example, Leidner and Li reviewed different approaches to intergroup violence and found a relationship between intergroup violence and anger [1]. Belus et al. argued that young women’s perpetration of intimate partner violence (IPV) appears to be more closely related to their emotional responses, in particular anger. In short, anger is a risk factor for IPV perpetration and intergroup violence [2,3].

Fear is based on survival circuits that seem to be universal [4]. It is a defense mechanism that evolved to protect animals from danger by balancing their need for primary resources against the risk of predation. Correct assessment of the risks and costs of foraging is vital for the fitness of foragers, which should avoid predation risk while limiting missed opportunities [5]. There is also neuroscientific evidence for defensive avoidance of fear appeals [6].

Theories of emotion processing suggest that emotional information, and particularly threat-related stimuli, enjoy a processing advantage [7] and are prioritized in the competition for attention [8]. Attentional capture is less likely to habituate for threatening information [9]. Emotion researchers often categorize angry and fearful face stimuli as “negative” or “threatening”. Successful interpretation of both types of facial expression helps motivate an individual to identify and avoid the source of threat, thereby leading to a higher probability of the species’ survival [10]. However, cognitive and behavioral studies have supported differences in information processing for fear and anger expressions. Perception of fear and anger appears to be mediated by dissociable neural circuits and often elicits distinguishable behavioral responses. Springer et al. suggested that while both angry and fearful faces convey messages of “threat”, their priming effects on startle circuitry differ, in that viewing angry faces was associated with a heightened startle eye-blink reflex [11]. From a developmental point of view, Kobiella et al. showed that seven-month-old infants are already able to distinguish the social meaning expressed by angry and fearful faces, rather than merely marking both as negative information [12]. For infants, a fearful face can cause more discomfort and produce a stronger state of arousal.

Some researchers have described an anger superiority or “pop out” effect, whereby angry expressions (vs. other facial emotions such as happiness or neutrality) are detected more efficiently among discrepant facial emotions during visual search [13]. Maratos also found angry faces to be associated with both the rapid capture and the rapid release of attention. Becker et al., however, found the reverse effect: happy faces, not angry faces, are more efficiently detected in single- and multiple-target visual search tasks [14]. Huang et al. indicated that a task-irrelevant angry face can capture attention beyond top-down control, but that this effect is modulated by the availability of attentional resources [15]. From the evolutionary perspective, fear is central to mammalian evolution. As a product of natural selection, it is shaped and constrained by evolutionary contingencies [7]. The fear prioritization effect has also been investigated from a functional-evolutionary perspective, and Bram et al. concluded that there is ample evidence for the existence of cognitive biases in fear [16].

Taken together, findings from a variety of studies spanning functional neuroimaging, neuropsychology, and behavioral or cognitive science approaches suggest that early views that broadly classify angry and fearful facial expressions as “negative” may be overly simplistic. Rather, fear and anger expressions may convey signals that rely on distinct neural circuits and elicit distinguishable behavioral responses. As Izard pointed out, emotion feelings stem from evolution and neurobiological development, not from conceptual acts [17]. Ledoux also shifted research from emotions to threat responses, arguing that although our conscious experiences may vary considerably, underneath them are universal survival circuits that operate implicitly, but similarly, in each of us [4]. From the perspective of evolution, the differential effects of these two facial threat signals on the defensive motivational system add to a growing literature highlighting the importance of distinguishing between emotional stimuli of similar valence along lines of meaning and functional impact.

Two types of cognition

Building on the notion of cognition as adaptive systems shaped by environmental demands and life experiences, Sundararajan argued that there are two types of cognition: relational and non-relational [18]. This concept is consistent with Bloom’s claim that there are two independently evolved systems for reasoning about the world, one for the physical world and the other for the social world [19]. Relational cognition is needed for social bonding among conspecifics, whereas non-relational cognition serves mastery and control of the world.

Yin et al. found that social information can drive perceptual grouping of objects in dynamic chase. This and other findings suggest that social information is involved at an early perceptual stage [20]. Similarly, Ledoux documented the early processing of the fear response prior to conscious feeling states [4]. To investigate the difference in cognition between fear and anger at the early perceptual stage, we used inhibition of return (IOR).

Inhibition of return

In exogenous spatial cueing, the typical pattern of results is early facilitation followed by inhibition. That is, at short stimulus onset asynchronies (SOAs), reaction time (RT) is faster for valid trials (i.e., target and cue presented at the same spatial location) than for invalid trials (i.e., target and cue presented at opposite locations). At longer SOAs, however, RT is slower for valid than for invalid trials. The latter effect was termed inhibition of return (IOR) and has been a focus of research since it was discovered by Posner and Cohen. IOR occurs in the aftermath of oculomotor activation and is a long-lasting response bias that affects overt and covert orienting and serves a novelty-seeking function [21,22]. Researchers agree that IOR is a delay in returning attention to an object or position that has already been attended, and that this ability to inhibit irrelevant positions is important for improving search efficiency [23,24]. IOR is bound to both retinotopic and object-centered locations, the latter defined as a specific location within the boundaries of a single object [25].
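The facilitation-then-inhibition pattern described above can be expressed as a simple cueing score. The following is a minimal sketch, assuming the score is defined as mean invalid-cue RT minus mean valid-cue RT, so that positive values indicate facilitation and negative values indicate IOR. The function names and the RT values are hypothetical illustrations, not data from this study.

```python
from statistics import mean


def cueing_effect(valid_rts, invalid_rts):
    """Mean invalid-cue RT minus mean valid-cue RT, in ms (assumed convention)."""
    return mean(invalid_rts) - mean(valid_rts)


def label_effect(effect_ms):
    """Name the direction of the cueing effect under the assumed sign convention."""
    if effect_ms > 0:
        return "facilitation"          # valid trials were faster
    if effect_ms < 0:
        return "inhibition of return"  # valid trials were slower
    return "no cueing effect"


# Hypothetical RTs (ms) for a short and a long SOA.
short_soa = cueing_effect(valid_rts=[310, 320, 330], invalid_rts=[350, 360, 370])
long_soa = cueing_effect(valid_rts=[400, 410, 420], invalid_rts=[370, 380, 390])

print(short_soa, label_effect(short_soa))
print(long_soa, label_effect(long_soa))
```

With these made-up numbers the short SOA yields a positive score and the long SOA a negative one, which is the qualitative pattern the paragraph describes.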

Mills et al. measured a delay effect (an effect attributable to IOR) and found that this effect may be task-specific, suggesting that gaze control parameters are task-relevant and potentially affected by task-switching [26]. The goal of this study is therefore to find out whether two negative emotions, fear and anger, both known for their attention-grabbing capacity, are equally prone to the detection cost or habituation of attention as measured by IOR. Because IOR essentially measures hard-wired mechanisms, it can shed light on the cognitive systems of fear and anger at the early perceptual stage.

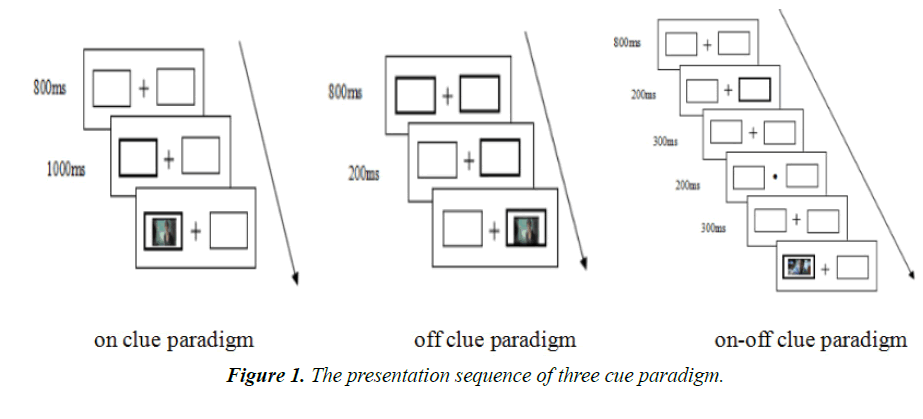

IOR experiments use three cue paradigms: on cue, off cue and on-off cue [27]. In the on cue paradigm, the cue is present throughout the experiment; in the off cue paradigm, the cue is present from the beginning and disappears during the experiment; and the on-off cue paradigm combines the on cue and the off cue in one experiment, with the two cues disappearing in synchrony [28]. Existing research shows that the type of cue changes the IOR effect. Riggio et al. tested IOR with the three cue paradigms and found that the amount of IOR was roughly equal in the on cue and off cue paradigms, but that in the on-off cue paradigm the amount of IOR was larger than the combined amount of IOR in the other two paradigms [29].

In sum, this study asks whether fear and anger are equally prone to IOR. To characterize the differences between angry and fearful facial expressions and the IOR they elicit in the three cue conditions, we used dynamic video screenshots as target stimuli in the cue-target paradigm. The cue-to-target onset asynchrony (CTOA) was 1000 ms [30]. We hypothesized that the IOR for angry and fearful facial expressions would show adaptive changes across the three cue conditions because of the different threat meanings of the two expressions.

Experiment

Materials

Research on facial expression recognition has been questioned with regard to the realism and ecological validity of its materials. As Wechsler et al. pointed out, using static facial emotional images (such as photographs) as stimuli not only casts doubt on the ecological validity of the study design but also leads to poorer facial expression recognition, because such materials lack substantial cues that distinguish between expressions [31]. From the perspective of neuroimaging, Kilts et al. found that different neural pathways are involved in recognizing static and dynamic expressions: because a static expression is a typical representation of a mental event, both the recognition strategies and the brain regions activated differ [32]. Colle and Del Giudice found that using dynamic video instead of static images can increase emotion recognition accuracy [33]. With dynamic facial expressions, people can systematically use different aspects of the expression, together with other cues such as the position of the head and the direction of gaze, to enhance the accuracy of perception, all of which may affect inferences about the nature of the emotion [34]. Hsu and Yang pointed out that facial expression classification should be a dynamic rather than a static process [35], because a facial expression is part of a sequence in which muscle movements and emotions coexist at a given moment. Du et al. confirmed the feasibility and credibility of using dynamic expressions in experimental research [36].

We captured 568 dynamic video screenshots of angry and fearful facial expressions of Chinese adults using the media player software CorePlayer and KanKan. The pictures were clear and the gender ratio was balanced; the pictures were then standardized with MeiTu software. Finally, 28 undergraduate students (14 males) rated the emotional type and intensity of the pictures on a 5-point scale, and 72 pictures were selected. The recognition accuracy of these pictures was between 50% and 75%, and the intensity of the two emotional expressions did not differ significantly (Mfear=4.06, SD=0.22; Manger=4.11, SD=0.17, t (1.27)=1.65, p=0.11).

Participants

Thirty-four undergraduate students (17 males, 17 females; mean age 21.26 years, SD=2.34) at Qufu Normal University participated in this experiment. All participants had normal or corrected-to-normal vision, were right-handed, and had no history of neurological disease or mental health problems. Each participant received a gift after completing the experiment.

Design

A 2 (gender: male, female) × 3 (cue paradigm: on cue, off cue, on-off cue) × 2 (cue validity: valid, invalid) × 2 (target emotion: anger, fear) mixed factorial design was used, and the data were analyzed with analysis of variance (ANOVA). Gender was a between-subjects factor; cue paradigm, cue validity and target emotion were within-subjects factors. Participants were asked to identify the emotional type of the target stimulus, and response time and accuracy were recorded.
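To make the structure of this design concrete, the sketch below enumerates its cells. The factor names and levels come from the design description; the variable names are illustrative only.

```python
from itertools import product

# Between-subjects factor.
GENDER = ("male", "female")

# Within-subjects factors.
CUE_PARADIGM = ("on cue", "off cue", "on-off cue")
CUE_VALIDITY = ("valid", "invalid")
TARGET_EMOTION = ("anger", "fear")

# Every participant contributes data to each within-subjects cell.
within_cells = list(product(CUE_PARADIGM, CUE_VALIDITY, TARGET_EMOTION))

# The full design crosses those cells with the between-subjects factor.
all_cells = list(product(GENDER, CUE_PARADIGM, CUE_VALIDITY, TARGET_EMOTION))

print(len(within_cells), "within-subjects cells per participant")
print(len(all_cells), "cells in the full design")
for cell in within_cells:
    print(cell)
```

Each participant therefore provides RT and accuracy data in 3 × 2 × 2 = 12 conditions, and the full design has 24 cells.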

Procedure

The experiment was run in E-Prime. Participants completed the tasks under the three cue paradigms in random order and could rest for 5 min after completing each task. Each task consisted of instructions, a practice stage and an experimental stage. The instructions were as follows. First, a central fixation point was presented between two boxes, and participants were instructed to fixate this point throughout. When a facial expression appeared in one of the boxes, participants had to identify the type of expression quickly and accurately: if the expression was fear, they pressed the "F" key, and if the expression was anger, they pressed the "K" key. The presentation sequence of the three cue paradigms is shown in Figure 1.
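The response rule in the instructions can be sketched as a small scoring function. The experiment itself was run in E-Prime; the Python code below only illustrates the key mapping ("F" for fear, "K" for anger), and the names `KEY_TO_EMOTION`, `score_response` and the example trials are hypothetical.

```python
# Key mapping taken from the instructions: "F" = fear, "K" = anger.
KEY_TO_EMOTION = {"f": "fear", "k": "anger"}


def score_response(key, target_emotion):
    """Return True when the pressed key matches the emotion of the target face."""
    return KEY_TO_EMOTION.get(key.lower()) == target_emotion


# Hypothetical trials: (pressed key, target emotion).
trials = [("F", "fear"), ("K", "anger"), ("F", "anger"), ("K", "fear")]
correct = [score_response(key, emotion) for key, emotion in trials]
accuracy = sum(correct) / len(correct)

print(correct)
print(accuracy)
```

Any key other than "F" or "K" is scored as incorrect in this sketch; how such responses were handled in the actual experiment is not stated.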

Results

Each participant’s identification accuracy was above 90%, and accuracy did not differ significantly for any of the variables in the three cue paradigms.

The reaction time of expression recognition in different experimental conditions

As shown in Table 1, the analysis of the RT data revealed that the main effect of gender approached significance: female participants tended to respond faster than male participants in the three cue conditions. The main effect of target stimulus was significant, with faster responses to fearful than to angry facial expressions. The main effect of cue validity was significant, with slower responses to cued targets than to uncued targets. This indicated that IOR appeared at the cued position and facilitation at the uncued position.

The magnitude of IOR of expression recognition in different experimental conditions

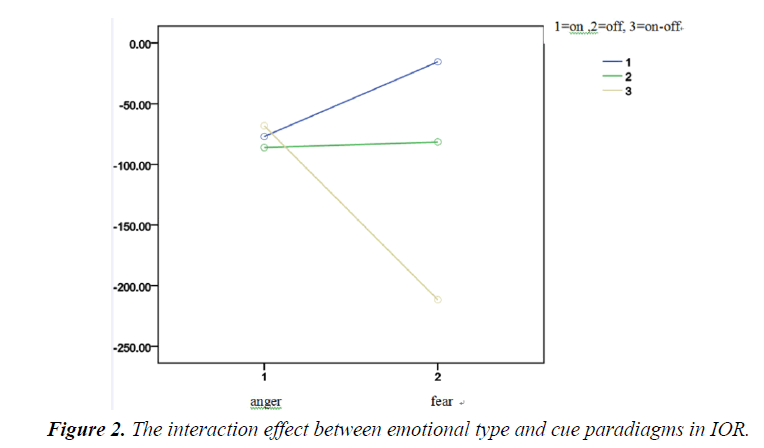

As shown in Table 2, the analysis of the magnitude of IOR in the different conditions revealed that the main effects of gender, emotional type and cue validity were not significant. However, the interaction between emotional type and cue paradigm was significant, F(1,31)=4.211, p=0.019, ηp²=0.113. Simple effect analysis revealed no difference in the magnitude of IOR for anger across the three cue paradigms, whereas for fear the magnitude of IOR in the on-off cue paradigm was significantly greater than in the other two paradigms. This indicated that when identifying anger, an interpersonal, interdependent emotion, the effect of cue paradigm on IOR is smaller, whereas when identifying fear, which originated in the evolution of the species, the effect of cue paradigm on IOR is larger, with the strongest IOR in the on-off cue paradigm (Figure 2).

Discussion

Relative independence between recognition advantage and IOR

Responses to fearful expressions were significantly faster than responses to angry expressions under the three cue paradigms, indicating that the recognition of fear had priority over the recognition of anger. Öhman and Mineka developed the concept of an evolved fear module to explain important aspects of human fear: fear is tied to events and situations that often threatened the survival of our ancestors [7]. Anger, by contrast, is often related to aggression. Battaglia et al. found that in children aged 8 to 11 years, recognition accuracy for anger was lower than for fear [37]. Although the present study did not find this difference in accuracy, reaction times were longer for anger than for fear. This may be because the participants in this study were young college students, who may have a good ability to recognize angry expressions. Derek et al. also found that among young, middle-aged and elderly groups, the young group’s recognition accuracy for anger was the highest [38]. Individual traits affect human vigilance and hence may affect the time course of processing angry faces. Rohner’s study showed that participants with high trait anxiety looked at angry faces for a longer time [39].

Consistent with previous research, we found that females recognized angry and fearful expressions significantly faster than males [33,40]. Hall et al. explained this in terms of an attentional mechanism: they found that females paid more attention to, and scanned more, the eyes at the center of the face region [41]. In our experiment, we used dynamic video screenshots as stimulus materials, so participants could obtain rich eye information and showed an identification advantage. Both emotional type and gender showed an identification advantage, but the magnitude of IOR for these two variables differed. This indicated that the psychological process of IOR is orthogonal to recognition, and that inhibition of the cued location was not eliminated even though there was an identification advantage.

| Gender | Emotion | On cue: valid | On cue: invalid | Off cue: valid | Off cue: invalid | On-off cue: valid | On-off cue: invalid |
|---|---|---|---|---|---|---|---|
| Female | Anger | 1720.01 (537.41) | 1644.61 (539.59) | 1701.40 (365.46) | 1639.41 (418.92) | 1511.69 (325.06) | 1505.32 (357.55) |
| Female | Fear | 1637.49 (584.47) | 1624.16 (557.09) | 1605.22 (343.31) | 1561.64 (271.80) | 1506.07 (396.03) | 1305.22 (179.92) |
| Male | Anger | 2193.34 (646.60) | 2114.67 (616.64) | 1959.63 (364.54) | 1849.45 (364.90) | 1844.34 (482.57) | 1714.41 (408.36) |
| Male | Fear | 1995.75 (552.44) | 1978.12 (559.97) | 1866.78 (358.28) | 1747.44 (301.39) | 1650.98 (418.92) | 1428.66 (266.57) |
| Participants gender (F, sig) | | 4.676 (0.038) | | 4.259 (0.047) | | 4.281 (0.047) | |
| Emotional types (F, sig) | | 7.098 (0.012) | | 7.798 (0.009) | | 13.218 (0.001) | |
| Cue validity (F, sig) | | 32.625 (0.000) | | 12.891 (0.001) | | 7.792 (0.009) | |

Table 1. Mean RT and ANOVA of fear and anger facial expression in three cue paradigms. ANOVA values apply to each cue paradigm as a whole.

| Participants gender | Emotional types | On cue paradigm | Off cue paradigm | On-off cue paradigm |
|---|---|---|---|---|
| Female | Anger | -75.40 (125.53) | -61.99 (219.92) | -6.37 (205.45) |
| Female | Fear | -13.33 (143.70) | -43.58 (158.13) | -200.85 (391.05) |
| Male | Anger | -78.67 (133.13) | -110.18 (172.77) | -129.93 (431.94) |
| Male | Fear | -17.62 (180.02) | -119.35 (217.45) | -222.32 (415.00) |

Table 2. The mean score and standard deviation of the magnitude of IOR of anger and fear in three cue paradigms.
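As a consistency check, the IOR magnitudes in Table 2 can be recomputed from the mean RTs in Table 1 as invalid-cue RT minus valid-cue RT, so that negative values indicate slower responses at the cued location. The sketch below assumes this sign convention, which is inferred from the tabled values and not stated in the text. The names `TABLE1_MEAN_RT`, `TABLE2_IOR` and `ior_magnitude` are illustrative.

```python
# Mean RTs (ms) copied from Table 1: (valid cue, invalid cue) per cue paradigm.
TABLE1_MEAN_RT = {
    ("female", "anger"): {"on": (1720.01, 1644.61), "off": (1701.40, 1639.41), "on-off": (1511.69, 1505.32)},
    ("female", "fear"): {"on": (1637.49, 1624.16), "off": (1605.22, 1561.64), "on-off": (1506.07, 1305.22)},
    ("male", "anger"): {"on": (2193.34, 2114.67), "off": (1959.63, 1849.45), "on-off": (1844.34, 1714.41)},
    ("male", "fear"): {"on": (1995.75, 1978.12), "off": (1866.78, 1747.44), "on-off": (1650.98, 1428.66)},
}

# Mean IOR magnitudes copied from Table 2.
TABLE2_IOR = {
    ("female", "anger"): {"on": -75.40, "off": -61.99, "on-off": -6.37},
    ("female", "fear"): {"on": -13.33, "off": -43.58, "on-off": -200.85},
    ("male", "anger"): {"on": -78.67, "off": -110.18, "on-off": -129.93},
    ("male", "fear"): {"on": -17.62, "off": -119.35, "on-off": -222.32},
}


def ior_magnitude(valid_rt, invalid_rt):
    """Invalid-cue RT minus valid-cue RT (assumed convention), rounded to 2 decimals."""
    return round(invalid_rt - valid_rt, 2)


recomputed = {
    group: {paradigm: ior_magnitude(*rts) for paradigm, rts in paradigms.items()}
    for group, paradigms in TABLE1_MEAN_RT.items()
}

# Largest absolute gap between the recomputed and the tabled values.
max_gap = max(
    abs(recomputed[group][paradigm] - TABLE2_IOR[group][paradigm])
    for group in TABLE2_IOR
    for paradigm in TABLE2_IOR[group]
)

for group, paradigms in recomputed.items():
    print(group, paradigms)
print("largest gap vs. Table 2:", round(max_gap, 2))
```

Under this convention the recomputed values agree with Table 2 to within about 0.01 ms, a gap that presumably reflects rounding of the published means.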

Cue paradigm and emotional type combined affect IOR

The results showed that cue paradigm and emotional type jointly affect IOR: the IOR for identifying angry expressions was less affected by cue paradigm, whereas the magnitude of IOR for identifying fearful expressions was largest in the on-off cue paradigm. Because of prepared attention to angry facial expressions [42,43], individuals have time to prepare and devote more attention to coping with social challenges. Prepared attention to angry facial expressions, which derives from interpersonal dependency, can partly weaken the inhibition produced by cues, so there was a stable but weak IOR effect across the three cue paradigms.

Riggio et al. proposed that the on-off cue paradigm, in which the cue appears and then disappears, attracts more of participants’ attention and produces stronger inhibition than the other two cue paradigms [29]. Our study found that when recognizing fearful expressions, the magnitude of IOR increased significantly in the on-off cue paradigm, whereas when recognizing angry expressions, the magnitude of IOR did not differ across the three cue paradigms. This indicated that, in some conditions, the type of expression can overcome IOR, which has been called a hunting-promoting mechanism [44]. As Parks et al. pointed out, capture occurred regardless of the nature of the distractors, but the extended holding of attention depended on the ongoing distractor context [45]. Participants could maintain attentional alertness under the three cue paradigms when recognizing fearful expressions, whereas when recognizing angry expressions, reaction time increased under conditions of strong inhibition. This suggested that attention is differentiated between the recognition of fearful and angry expressions when dynamic video screenshots, which have good ecological validity, are used as stimulus materials instead of expression faces or expression symbols. The differentiation appeared under strong cue inhibition, where reaction times increased for fearful expressions that appeared at the cued position.

The main finding is that anger, relative to fear, can override the IOR effect. The implication is that the evolutionary function of an emotion is the determining factor in the IOR effect. Anger evolved to detect social threat and hence needs to override habituation of attention in order to continue focusing on the social cues in the angry face. Fear evolved to detect danger in the environment and hence takes advantage of habituation to search far and wide for potential danger [44]. This is consistent with the distinction drawn by Sundararajan between two cognitive systems, one evolved for mastery of the natural environment and the other for purposes of social harmony: in the evolutionary framework, anger overrides the IOR effect in order to dwell on the social/relational threat, whereas fear takes advantage of habituation in order to search far and wide for threat that comes from the environment [18,19].

Conclusion

In conclusion, fear and anger expressions signal different kinds of threat. This differentiation in threat meaning is evident not only in the fact that fearful expressions are recognized faster than angry expressions, but also in the fact that anger, relative to fear, can override the IOR effect. These findings give empirical support to the distinction drawn by Sundararajan and others between relational and non-relational cognition [18].

Acknowledgement

We thank Louise Sundararajan for her suggestions for revision.

References

- Leidner B, Li M. How to (re)build human rights consciousness and behavior in post-conflict societies: An integrative literature review and framework for past and future research. Journal of Peace Psychology 2015;21:106-132.

- Belus JM, Wanklyn SG, Iverson KM, et al. Do anger and jealousy mediate the relationship between adult attachment styles and intimate violence perpetration? Partner Abuse 2014;5:388-406.

- Birkley EL, Eckhardt CI. Anger, hostility, internalizing negative emotions, and intimate partner violence perpetration: A meta-analytic review. Clin Psychol Rev 2015;37:40-56.

- Ledoux JE. Feelings: What are they and how does the brain make them? Daedalus 2015;144:96-111.

- Eccard JA, Liesenjohann T. The importance of predation risk and missed opportunity costs for context-dependent foraging patterns. Plos One 2014;9:e94107.

- Kessels LTE, Ruiter RAC, Wouters L, et al. Neuroscientific evidence for defensive avoidance of fear appeals. Int J Psychol 2014; 49:80-88.

- Ohman A, Mineka S. Fears, phobias and preparedness: Toward an evolved module of fear and fear learning. Psychol Rev 2001;108:483-522.

- Vuilleumier P. Facial expression and selective attention. Curr Opin Psychiatry 2002;15:291-300.

- Pérez-Dueñas C, Acosta A, Lupiáñez J. Reduced habituation to angry faces: Increased attentional capture as to override inhibition of return. Psychol Res 2014;78:196-208.

- Darwin C. The expression of the emotions in man and animals. University of Chicago Press, Chicago 1965.

- Springer US, Rosas A, McGetrick J, et al. Differences in startle reactivity during the perception of angry and fearful faces. Emotion 2007;7:516-525.

- Kobiella A, Grossmann T, Reid VM, et al. The discrimination of angry and fearful facial expressions in 7-month-old infants: An event-related potential study. Cognition and Emotion 2008;22:134-146.

- Fox E, Lester V, Russo R, et al. Facial expressions of emotion: Are angry faces detected more efficiently? Cognition and Emotion 2000;14:61-92.

- Becker DV, Anderson US, Mortensen CR, et al. The face in the crowd effect unconfounded: Happy faces, not angry faces, are more efficiently detected in single- and multiple-target visual search tasks. Journal of Experimental Psychology 2011;140:637-659.

- Huang S, Chang Y, Chen Y. Task-irrelevant angry faces capture attention in visual search while modulated by resources. Emotion 2011;11:544-552.

- Bram VB, Bruno V, Helen T, et al. A review of current evidence for the causal impact of attentional bias on fear and anxiety. Psychol Bull 2013;140:682-721.

- Izard CE. Emotion feelings stem from evolution and neurobiological development, not from conceptual acts: Corrections for Barrett et al. Perspectives on Psychological Science 2007;2:404-405.

- Sundararajan L. Understanding emotion in Chinese culture. Springer Cham Heidelberg Publishing Switzerland 2015.

- Bloom P. Descartes' baby. Basic Books, New York 2009.

- Yin J, Ding X, Zhou J, et al. Social grouping: Perceptual grouping of objects by cooperative but not competitive relationships in dynamic chase. Cognition 2013;129:194-204.

- Posner MI, Cohen YPC. Components of visual orienting. Attention and performance X, Erlbaum, London 1984;531-556.

- Hilchey MD, Klein RM, Satel J. Returning to "inhibition of return" by dissociating long-term oculomotor IOR from short-term sensory adaptation and other nonoculomotor "inhibitory" cueing effects. Journal of Experimental Psychology Human Perception & Performance 2014;40:1603-1616.

- Weaver MD, Aronsen D, Lauwereyns J. A short-lived face alert during inhibition of return. Attention Perception & Psychophysics 2012;74:510-520.

- Wang Jingxin, Jia Liping, Bai Xuejun, et al. Emotional faces processing takes precedence of inhibition of return: ERPs study. Acta Psychologica Sinica 2013;45:1-10.

- Theeuwes J, Mathôt S, Grainger J. Object-centered orienting and IOR. Attention Perception & Psychophysics 2014;76:1-7.

- Mills M, Dalmaijer ES, Van der Stigchel S, et al. Effects of task and task-switching on temporal inhibition of return, facilitation of return and saccadic momentum during scene viewing. Journal of Experimental Psychology 2015;41:1300-1314.

- Ming Z, Ning L. Experimental paradigms for inhibition of return. Advances in Psychological Science 2007;15:385-393.

- Pratt J, McAuliffe J. The effects of onsets and offsets on visual attention. Psychol Res 2001;65:185.

- Riggio L, Bello A, Umiltà C. Inhibitory and facilitatory effects of cue onset and offset. Psychol Res 1998;61:107.

- Taylor TL, Therrien ME. Inhibition of return for faces. Percept Psychophys 2005;67:1414-1422.

- Wechsler T, Kaiser S, Schmidt S, et al. Studying the dynamics of emotional expression using synthesized facial muscle movements. J Pers Soc Psychol 2000;78:105-119.

- Kilts CD, Egan G, Gideon DA, et al. Dissociable neural pathways are involved in the recognition of emotion in static and dynamic facial expressions. Neuroimage 2003;18:156.

- Colle L, Del Giudice M. Patterns of attachment and emotional competence in middle childhood. Social Development 2011;20:51-72.

- Barrett LF, Kensinger EA. Context is routinely encoded during emotion perception. Psychol Sci 2010;21:595-599.

- Hsu S, Yang L. Sequential effects in facial expression categorization. Emotion 2013;13:573-586.

- Du Jinglun, Yao Zhijian, Xie Zhiping, et al. Neural substrates for explicit recognition of dynamic facial expression by fMRI. Chinese Mental Health Journal 2007;121:558-562.

- Battaglia M. Identification of gradually changing emotional expressions in schoolchildren: The influence of the type of stimuli and of specific symptoms of anxiety. Cognition and Emotion 2010; 24:1070-1088.

- Derek MI, Corinna EL, Richard DL, et al. Age differences in recognition of emotion in lexical stimuli and facial expressions. Psychol Aging 2007;22:147-159.

- Rohner J. The time-course of visual threat processing: High trait anxious individuals eventually avert their gaze from angry faces. Cognition & Emotion 2002;16:837-844.

- Rahman Q, Wilson GD, Abrahams S. Sex, sexual orientation and identification of positive and negative facial affect. Brain and Cognition 2004;54:179-185.

- Hall JK, Hutton SB, Morgan MJ. Sex differences in scanning faces: Does attention to the eyes explain female superiority in facial expression recognition? Cognition & Emotion 2010;24:629-637.

- Belopolsky AV, Devue C, Theeuwes J. Angry faces hold the eyes. Visual Cognition 2011;19:27-36.

- Crowe SE, Wilkowski BM. Looking for trouble: Revenge-planning and preattentive vigilance for angry facial expressions. Emotion 2013;13:774-781.

- Stoyanova RS, Pratt J, Anderson AK. Inhibition of return to social signals of fear. Emotion 2007;7:49-56.

- Parks EL, Kim SY, Hopfinger JB. The persistence of distraction: A study of attentional biases by fear, faces, and context. Psychonomic Bulletin & Review 2014;21:1501-1508.